
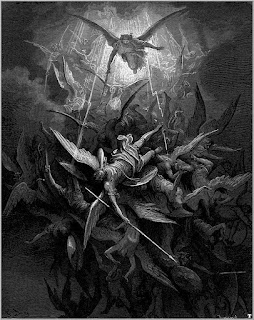

Gustave Doré: Illustration for Paradise Lost

(Updated 17 Dec 2022)

As children, we grow up with stories of the battle between good and evil, in which good ultimately triumphs. In adulthood, we know things can be more complicated: bad people can get into positions of power and make everyone suffer. And yet, we tell ourselves, we have a strong legal framework, there are checks and balances, and a political system that aspires to be free and fair.

During the last decade, I started for the first time to have serious doubts about those assumptions. In both the UK and the US, the same pattern is seen repeatedly: the media report on a scandal involving the government or a public figure, there is a brief period of public outrage, but then things continue as before.

In the UK we have become accustomed to politicians lying to Parliament and failing to correct the record, to bullying by senior politicians, and to safety regulations being ignored. The current scandal is a case of disaster capitalism where government cronies made vast fortunes from the Covid pandemic by gaining contracts for personal protective equipment – which was not only provided at inflated prices, but then could not be used as it was substandard.

These are all shocking stories, but even more shocking is the lack of any serious consequences for those who are guilty. In the past, politicians would have resigned for minor peccadilloes, with pressure from the Prime Minister if need be. During Boris Johnson’s premiership, however, the Prime Minister was part of the problem.

During the Trump presidency in the US, Sarah Kendzior wrote about “saviour syndrome” - the belief people had that someone would come along and put things right. As she noted: “Mr. Trump has openly committed crimes and even confessed to crimes: What is at stake is whether anyone would hold him accountable.” And, sadly, the answer has been no.

No consequences for scientific fraud

So what has this got to do with science? Well, I get the same sinking feeling that there is a major problem, everyone can see there's a problem, but nobody is going to rescue us. Researchers who engage in obvious malpractice repeatedly get away with no consequences. This has been a recurring theme from those who have exposed academic papermills (Byrne et al., 2021) and/or reported manipulation of figures in journal articles (Bik et al., 2016). For instance, when Bik was interviewed by Nature, she noted that 60-70% of the 800 papers she had reported to journals had not been dealt with within 5 years. That matches my more limited experience; if one points out academic malpractice to publishers or institutions, there is often no reply. Those who do reply typically say they will investigate, but then you hear no more.

At a recent symposium on Research Integrity at Liverpool Medical Institution*, David Sanders (Purdue University) told of repeated experiences of being given the brush-off by journals and institutions when reporting suspect papers. For instance, he reported an article that had simply recycled a table from a previous paper on a different topic. The response was “We will look into it”. “What”, said David incredulously, “is there to look into?”. This is the concern – that there can be blatant evidence of malpractice within a paper, yet the complainant is ignored. In this case, nothing happened. There are honorable exceptions, but it seems shocking that serious and obvious errors in work are not dealt with in a prompt and professional manner.

At the same seminar, there was a searing presentation by Peter Wilmshurst, whose experiences of exposing medical fraud by powerful individuals and organisations have led him to be the subject of numerous libel complaints. Here are a few details of two of the cases he presented:

Paolo Macchiarini: Convicted in 2022 of causing bodily harm with an experimental transplant of a synthetic windpipe that he performed between 2011 and 2012. Wilmshurst noted that the descriptions of the experimental surgery in journals were incorrect. For a summary see this BMJ article. A 2008 paper by Macchiarini and colleagues is still published in the Lancet, despite demands for it to be retracted.

Don Poldermans: An eminent cardiologist who conducted a series of studies on perioperative betablockers, leading them to be recommended in guidelines from the European Society of Cardiology, whose task force he chaired. A meta-analysis challenged that conclusion, showing mortality increased; an investigation found that work by Poldermans had serious integrity problems, and he was fired. Nevertheless, the papers have not been retracted. Wilmshurst estimated that thousands of deaths would have resulted from physicians following the guidelines recommending betablockers.

The week before the Liverpool meeting, there was a session on Correcting the Record at AIMOS2022. The four speakers, John Loadsman (anaesthesiology), Ben Mol (obstetrics and gynecology), Lisa Parker (oncology) and Jana Christopher (image integrity) covered the topic from a range of different angles, but in every single talk, the message came through loud and clear: it’s not enough to flag up cases of fraud – you have to then get someone to act on them, and that is far more difficult than it should be.

And then on the same day as the Liverpool meeting, Le Monde ran a piece about a researcher whose body of work contained numerous problems: the same graphs were used across different articles that purported to show different experiments, and other figures had signs of manipulation. There was an investigation by the institution and by the funder, Centre National de la Recherche Scientifique (CNRS), which concluded that there had been several breaches of scientific integrity. However, it seems that the recommendation was simply that the papers should be “corrected”.

Why is scientific fraud not taken seriously?

There are several factors that conspire to get scientific fraud brushed under the carpet.

1. Accusations of fraud may be unfounded. In science, as in politics, there may be individuals or organisations who target people unfairly – either for personal reasons, or because they don’t like their message. Furthermore, everyone makes mistakes and it would be dangerous to vilify researchers for honest errors. So it is vital to do due diligence and establish the facts. In practice, however, this typically means giving the accused the benefit of the doubt, even when the evidence of misconduct is strong. While it is not always easy to demonstrate intent, there are many cases, such as those noted above, where a pattern of repeated transgressions is evident in published papers – and yet nothing is done.

2. Conflict of interest. Institutions may be reluctant to accept that someone is fraudulent if that person occupies a high-ranking role in the organisation, especially if they bring in grant income. Worries about reputational risk also create conflict of interest. The Printeger project is a set of case studies of individual research misconduct cases, which illustrates just how inconsistently these are handled in different countries, especially with regard to transparency vs confidentiality of process. It concluded “The reflex of research organisations to immediately contain and preferably minimise misconduct cases is remarkable”.

3. Passing the buck. Publishers may be reluctant to retract papers unless there is an institutional finding of misconduct, even if there is clear evidence that the published work is wrong. I discussed this here. My view is that leaving flawed research in the public record is analogous to a store selling poisoned cookies to customers – you have a responsibility to correct the record as soon as possible when the evidence is clear to avoid harm to consumers. Funders might be expected to also play a role in correcting the record when research they have funded is shown to be flawed. Where public money is concerned, funders surely have a moral responsibility to ensure it is not wasted on fraudulent or sloppy research. Yet in her introduction to the Liverpool seminar, Patricia Murray noted that the new UK Committee on Research Integrity (CORI) does not regard investigation of research misconduct as within its purview.

4. Concerns about litigation. Organisations often have concerns that they will be sued if they make investigations of misconduct public, even if they are confident that misconduct occurred. These concerns are justified, as can be seen from the lawsuits that most of the sleuths who spoke at AIMOS and Liverpool have been subjected to. My impression is that, provided there is clear evidence of misconduct, the fraudsters typically lose libel actions, but I’d be interested in more information on that point.

Consequences when misconduct goes unpunished

The lack of consequences for misconduct has many corrosive impacts on society.

1. Political and scientific institutions can only operate properly if there is trust. If lack of integrity is seen to be rewarded, this erodes public confidence.

2. People depend on us getting things right. We are confronting major challenges to health and to our environment. If we can’t trust researchers to be honest, then we all suffer as scientific progress stalls. Over-hyped findings that make it into the literature can lead subsequent generations of researchers to waste time pursuing false leads. Ultimately, people are harmed if we don’t fix fraud.

3. Misconduct leads to waste of resources. It is depressing to think of all the research that could have been supported by the funds that have been spent on fraudulent studies.

4. People engage in misconduct because in a competitive system, it brings them personal benefits, in terms of prestige, tenure, power and salary. If the fraudsters are not tackled, they end up in positions of power, where they will perpetuate a corrupt system; it is not in their interests to promote those who might challenge them.

5. The new generation entering the profession will become cynical if they see that one needs to behave corruptly in order to succeed. They are left with the stark choice of joining in the corruption or leaving the field.

What can be done?

There’s no single solution, but I think there are several actions that are needed to help clean up the mess.

1. Appreciate the scale of the problem.

When fraud is talked about in scientific circles, you typically get the response that “fraud is rare” and “science is self-correcting”. A hole has been blown in the first assumption by the emergence of industrial-scale fraud in the form of academic paper-mills. The large publishers are now worried enough about this to be taking concerted action to detect papermill activity, and some of them have engaged in mass retractions of fraudulent work (see, e.g. the case of IEEE retractions here). Yet, I have documented on PubPeer numerous new papermill articles in Hindawi special issues appearing since September of this year, when the publisher announced it would be engaging in retraction of 500 papers. It’s as if the publisher is trying to clean up with a mop while a fire-hose is spewing out fraudulent content. This kind of fraud is different from that reported by Wilmshurst, but it illustrates just how slow the business of correcting the scientific record can be – even when the evidence for fraud is unambiguous.


Publishers trying to mop up papermill outputs

Yes, self-correction will ultimately happen in science, when people find they cannot replicate the flawed research on which they try to build. But the time-scale for such self-correction is often far longer than it needs to be. We have to understand just how much waste of time and money is caused by reliance on a passive, natural evolution of self-correction, rather than a more proactive system to root out fraud.

2. Full transparency

There’s been a fair bit of debate about open data, and now it is recognised that we also need open code (scripts to generate figures etc.) to properly evaluate results. I would go further, though, and say we also need open peer review. This need not mean that the peer reviewer is identified, but just that their report is available for others to read. I have found open peer reviews very useful in identifying papermill products.

3. Develop shared standards

Organisations such as the Committee on Publication Ethics (COPE) give recommendations for editors about how to respond when an accusation of misconduct occurs. Although this looks like a start in specifying standards to which reputable journals should adhere, several speakers at the AIMOS meeting suggested that COPE guidelines were not suited for dealing with papermills and could actually delay and obfuscate investigations. Furthermore, COPE has no regulatory power and publishers are under no obligation to follow the guidelines (even if they state they will do so).

4. National bodies for promoting scientific integrity

The Printeger project (cited above) noted that “A typical reaction of a research organisation facing unfamiliar research misconduct without appropriate procedures is to set up ad hoc investigative committees, usually consisting of in-house senior researchers…. Generally, this does not go well.”

In response to some high-profile cases that did not go well, some countries have set up national bodies for promoting scientific integrity. These are growing in number, but those who report cases to them often complain that they are not much help when fraud is discovered – sometimes this is because they lack the funding to defend a legal challenge. But, as with shared standards, this is at least a start, and they may help gather data on the scale and nature of the problem.

5. Transparent discussion of breaches of research integrity

Perhaps the most effective way of persuading institutions, publishers and funders to act is by publicising when they have failed to respond adequately to complaints. David Sanders described a case where journals and institutions took no action despite multiple examples of image manipulation and plagiarism from one lab. He only got a response when the case was featured in the New York Times.

Nevertheless, as the Printeger project noted, relying on the media to highlight fraud is far from ideal – there can be a tendency to sensationalise and simplify the story, with potential for disproportionate damage to both accused and whistleblowers. If we had trustworthy and official channels to report suspected research misconduct, then whistleblowers would be less likely to seek publicity through other means.

6. Protect whistleblowers

In her introduction to the Liverpool Research Integrity seminar, Patricia Murray noted the lack of consistency in institutional guidelines on research integrity. In some cases, the approach to whistleblowers seemed hostile, with the guidelines emphasising that they would be guilty of misconduct if they were found to have made frivolous, vexatious and/or malicious allegations. This, of course, is fair enough, but it needs to be countered by recommendations that allow for whistleblowers who are none of these things, who are doing the institution a service by casting light on serious problems. Indeed, Prof Murray noted that in her institution, failure to report an incident that gives reasonable suspicion of research misconduct is itself regarded as misconduct. At present, whistleblowers are often treated as nuisances or cranks who need to be shut down. As was evident from the cases of both Sanders and Wilmshurst, they are at risk of litigation, and careers may be put in jeopardy if they challenge senior figures.

7. Changing the incentive structure in science

It’s well-appreciated that if you really want to stop a problem, you should understand what causes it and stop it at source. People do fraudulent research because the potential benefits are large and the costs seem negligible. We can change that balance by, on the one hand, having serious and public sanctions for those who commit fraud, and on the other hand, rewarding scientists who emphasise integrity, transparency and accuracy in their work, rather than those who get flashy, eyecatching results.

I'm developing my ideas on this topic and I welcome thoughts on these suggestions. Comments are moderated and so do not appear immediately, but I will post any that are on topic and constructive.

Update 17th December 2022

Jennifer Byrne suggested one further recommendation, as follows:

To change the incentive structure in scientific publishing. Journals are presently rewarded for publishing, as publishing drives both income (through subscriptions and/or open access charges) and the journal impact factor. In contrast, journals and publishers do not earn income and are not otherwise rewarded for correcting the literature that they publish. This means that the (seemingly rare) journals that work hard to correct, flag and retract erroneous papers are rewarded identically to journals that appear to do very little. Proactive journals appear to represent a minority, but while there are no incentives for journals to take a proactive approach to published errors and misinformation, it should not be surprising that few journals join their efforts. Until publication and correction are recognized as two sides of the same coin, and valued as such, it seems inevitable that we will see a continued drive towards publishing more and correcting very little, or continuing to value publication quantity over quality.

Bibliography

I'll also add here additional resources. I'm certainly not the first to have made the points in this post, and it may be useful to have other articles gathered together in one place.

Besançon, L., Bik, E., Heathers, J., & Meyerowitz-Katz, G. (2022). Correction of scientific literature: Too little, too late! PLOS Biology, 20(3), e3001572. https://doi.org/10.1371/journal.pbio.3001572

Byrne, J. A., Park, Y., Richardson, R. A. K., Pathmendra, P., Sun, M., & Stoeger, T. (2022). Protection of the human gene research literature from contract cheating organizations known as research paper mills. Nucleic Acids Research, gkac1139. https://doi.org/10.1093/nar/gkac1139

Christian, K., Larkins, J., & Doran, M. R. (2022). The Australian academic STEMM workplace post-COVID: a picture of disarray. BioRxiv. https://doi.org/10.1101/2022.12.06.519378

Lévy, R. (2022, December 15). Is it somebody else’s problem to correct the scientific literature? Rapha-z-Lab. https://raphazlab.wordpress.com/2022/12/15/is-it-somebody-elses-problem-to-correct-the-scientific-literature/

Research misconduct: Theory & Pratico – For Better Science. (n.d.). Retrieved 17 December 2022, from https://forbetterscience.com/2022/08/31/research-misconduct-theory-pratico/

Star marine ecologist committed misconduct, university says. (n.d.). Retrieved 17 December 2022, from https://www.science.org/content/article/star-marine-ecologist-committed-misconduct-university-says

Additions on 18th December: Yet more relevant stuff coming to my attention!

Naudet, Florian (2022) Lecture: Busting two zombie trials in a post-COVID world.

Wilmshurst, Peter (2022) Blog: Has COPE membership become a way for unprincipled journals to buy a fake badge of integrity?

*Addition on 20th December

Liverpool Medical Institution seminar on Research Integrity: The introduction by Patricia Murray, talk by Peter Wilmshurst, and Q&A are now available on YouTube.

And finally....

A couple of sobering thoughts:

Alexander Trevelyan on Twitter noted a great quote from the anonymous @mumumouse (author of Research misconduct blogpost above): “To imagine what it’s like to be a whistleblower in the science community, imagine you are trying to report a Ponzi scheme, but instead of receiving help you are told, nonchalantly, to call Bernie Madoff, if you wish.”

Peter Wilmshurst started his talk by relaying a conversation with Patricia Murray in the run-up to his talk. He said he planned to talk about the 3 Fs, fabrication, falsification and honesty.

To which Patricia replied, “There is no F in honesty”.

Thank you for drawing attention to this. In politics, this lack of consequences has drained me of any energy I had to be an activist – there seems no point in fighting against people who can walk away from anything.

In science, I think that lack of activity in response to uncovered wrongdoing often has a more benign (but still not acceptable) cause: the work of dealing with it is unrewarding. As a handling editor, I could either go through all the processes of retracting a paper, or I could use the same time and effort to shepherd three or four more through peer-review, or indeed write a new paper of my own. I get why unrewarded hard work lacks appeal, and I'm not sure what can be done about it.

I guess it depends on the subject matter, but I hope I have made the case that retraction of the dodgy stuff is important - especially if others would try to build on it, but even when it is less important. There is a big issue with bad actors getting appointed and promoted on the basis of fraudulent work and then corrupting the whole system.

Oh, I very much agree! I'm not for a moment arguing that it's OK for these issues to go unaddressed; only saying that I understand why it happens. I guess we need to somehow change the incentives. But how?

"...the new UK Committee on Research Integrity (CORI) does not regard investigation of research misconduct as within its purview"... well, of course not. The mere existence of CORI will stamp out research misconduct, so obviously no investigation will be needed. Duh.

As someone who exclusively worked in fundamental sciences (no money to be made), I see the problem of the large grey zone between obvious fraud and sloppy science, which makes it near-impossible to name fraud as such. The last excuse could always be: "sorry, we miscalculated".

I found that enforcing points 2 and 3 is most crucial: full transparency should be obligatory, open data a must unless it clashes with higher ethical concerns (protection of sites and people). It's particularly intolerable that public-hand financed research is held behind curtains to make re-analyses impossible (an example, me trying to see what Elsevier means with: “Data not available/will be provided on request”). One also may question whether industry-paid research should be admissible to scientific journals at all.

Regarding point 3, we need to start to hold journals and their editorial boards responsible for violating COPE guidelines, which are indeed, under the current protocols, rather cosmetic (another example: Editors who “entertain” but are not “answerable”).

One thing that may be missing in the list is incentives for post-review. The only current acknowledged venue is the "comment to" option, but more often than not the journals just let one run into the wall. The most unsettling story I ever heard from colleagues was of an Elsevier journal (Palaeo^3) keeping the comment on a fraudulent paper (no data documentation, misrepresented data basis, a method known to be highly problematic, getting new spectacular results, being fundamentally biased) under review for so long that the editor could inform the critics that their comment would now be ready to publish but cannot be, because the journal has a strict guideline of six months for accepting comments.

Thanks - agree re post-publication peer review needing to be recognised

I work as a research integrity officer at a university. There are many reasons why we leave serious misconduct unaddressed (without being corrupt ourselves). 1. Bad processes that tie us up in paperwork and make it hard for us to actually do anything. 2. Bad management that gets hung up on things that slow us down. 3. Bad complaints. I have a case right now that is completely baseless. I keep trying to find evidence to support the complaint but I can’t. And if I dismiss the complaint then I’ll get written up as one of the bad guys in these types of blog. But it’s all time taken away from serious complaints with significant evidence.

There’s more to it of course. I feel like I could write a book about how bad our systems are.

Thanks for your comment. It's really important to have this perspective, because I know some people just don't see the other side of the story with "bad faith" complaints. Some are from people who are just obsessed in a delusional way, and some come from organised groups who try to "take down" scientists who do research that doesn't fit their agenda. It does make things complicated, and can't be ignored. But meanwhile, as you note, the true fraudsters don't get dealt with properly.

You say you cannot substantiate the complaint. Then indeed go ahead and dismiss it. Why be afraid of accusations in "these types of blogs"? Especially when exactly the same can be thrown at you for your inaction?

If your accuser is right, admit your fault, correct your actions and improve for the future; alternatively, just resign. If your accuser is wrong, no harm is done to you.

The worst thing you can do is to sit on the case forever.

Your comment illustrates exactly what I’m talking about. If I dismiss the complaint, the accuser will be angry and appeal, so I need to ensure my work is watertight before dismissing it. But making my work watertight takes time, so commenters like you will place the blame on me for taking the time to dot my i’s and cross my t’s.

And in case you hadn’t noticed, most of my comment was criticising the systems and management I work under. I don’t write the rules or call the shots.

Since the 2013 update to the Declaration of Helsinki there's been a clear global ethical obligation to pre-register clinical trials and make their results public. There are dozens of studies that show widespread violations of these rules, often with accompanying datasets that list all "offending" trials. And there's broad consensus in the scientific community that failing to pre-register and report results causes significant harm to patients.

Nonetheless, AFAIK, since 2013 there has so far been only one instance in which an ethics complaint has been filed over such a violation (by the TranspariMED campaign, which I run):

https://www.transparimed.org/single-post/transparimed-files-ethics-complaint-over-unreported-cancer-trial-result

Why only one formal complaint? Three reasons I can think of.

First, academic medical research is a small world: people know each other and generally get along well. People are comfortable complaining loudly about the problem until they get blue in the face, but nobody wants to name names, let alone cause problems for a peer. Even when funders conduct audits of their portfolios, they do not publish line-by-line data identifying violators. This friendly high-trust environment has lots of upsides (see: excellent UK Covid research collaboration across institutions) but does not incentivise whistleblowing.

Second, let's be honest, professors are the first class passengers on the academic ship, and ECRs are working towards moving up to those cabins on the top deck in future. When in history have the first class passengers ever rocked a boat?

Finally, it's widely - and correctly - seen as a systemic problem. These failures do not happen in institutions that have strong systems, because strong systems prevent such failures from happening in the first place. TranspariMED could use existing public data to file hundreds of legitimate and conclusively documented ethics complaints literally within a week. We haven't done this so far because what we want is for institutions to get their acts together, rather than framing this as an issue of individual responsibility. (Our single ethics complaint to date made that very clear, see link above.)

I'd love to hear what other people think of TranspariMED's current approach. Should we or should we not be filing ethics complaints en masse?
