It’s been an interesting week in world politics, and I’ve been distracting myself by pondering the role of academic editors. The week kicked off with a rejection of a preprint written with co-author Anna Abalkina, an expert sleuth who tracks down academic paper mills – organisations that will sell you a fake publication in an academic journal. Our paper describes a paper mill that had placed six papers in the Journal of Community Psychology, a journal which celebrated its 50th anniversary in 2021. We had expected rejection: we submitted the paper to the Journal of Community Psychology as a kind of stress test, to see whether the editor, Michael B. Blank, actually reads papers that he accepts for the journal. I had started to wonder, because you can read his decision letters on Publons, and they are identical for every article he accepts. I suspected he might be an instance of Editoris Machina, or automaton, one who just delegates editorial work to an underling, waits until reviewer reports converge on a recommendation, and then accepts or rejects accordingly without actually reading the paper. I was wrong, though. He did read our paper, and rejected it with the comment that it was a superficial analysis of six papers. We immediately posted it as a preprint and plan to publish it elsewhere.

Although I was quite amused by all of this, it has a serious side. As we note in our preprint, when paper mills succeed in breaching the defences of a journal, this is not a victimless crime. First, it gives competitive advantage to the authors who paid the paper mill – they do this in order to have a respectable-looking publication that will help their career. I used to think this was a minor benefit, but when you consider that the paper mills can also ensure that the papers they place are heavily cited, you start to realise that authors can edge ahead on conventional indicators of academic prestige, while their more honest peers trail behind. The second set of victims are those who publish in the journal in good faith. Once its reputation is damaged by the evidence that there is no quality control, then all papers appearing in the journal are tainted by association. The third set of victims are busy academics who are trying to read and integrate the literature, who can get tangled up in the weeds as they try to navigate between useful and useless information. And finally, we need to be concerned about the cynicism induced in the general public when they realise that for some authors and editors, the whole business of academic publishing is a game, which is won not by doing good science, but by paying someone to pretend you have done so.

Earlier this week I shared my thoughts on the importance of ensuring that we have some kind of quality control over journal editors. They are, after all, the gatekeepers of science. When I wrote my taxonomy of journal editors back in 2010, I was already concerned at the times I had to deal with editors who were lazy or superficial in their responses to authors. I had not experienced ‘hands off’ editors in the early days of my research career, and I wondered how far this was a systematic change over time, or whether it was related to subject area. In the 1970s and 1980s, I mostly published in journals that dealt with psychology and/or language, and the editors were almost always heavily engaged with the paper, adding their own comments and suggesting how reviewer comments might be addressed. That’s how I understood the job when I myself was an editor. But when I moved to publishing work in journals that were more biological (genetics, neuroscience) things seemed different, and it was not uncommon to find editors who really did nothing more than collate peer reviews.

The next change I experienced was when, as a Wellcome-funded researcher, I started to publish in Wellcome Open Research (WOR), which adopts a very different publication model, based on that initiated by F1000. In this model, there is no academic editor. Instead, the journal employs staff who check that the paper complies with rigorous criteria: the proposed peer reviewers must have a track record of publishing and be free from conflicts of interest. Data and other materials must be openly available so that the work can be reproduced. And the peer review is published. The work is listed on PubMed if and when peer reviewers agree that it meets a quality threshold: otherwise the work remains visible but with status shown as not approved by peer review.

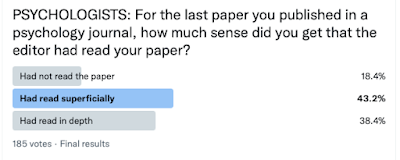

The F1000/WOR model shows that editors are not needed, but I generally prefer to publish in journals that do have academic editors – provided the editor is engaged and does their job properly. My papers have benefitted from input from a wise and experienced editor on many occasions. In a specialist journal, such an editor will also know who are the best reviewers – those who have the expertise to give a detailed and fair appraisal of the work. However, in the absence of an engaged editor, I prefer the F1000/WOR model, where at least everything is transparent. The worst of all possible worlds is when you have an editor who doesn’t do more than collate peer reviews, but where everything is hidden: the outside world cannot know who the editor was, how decisions were made, who did the reviews, and what they said. Sadly, this latter situation seems to be pretty common, especially in the more biological realms of science. To test my intuitions, I ran a little Twitter poll for different disciplines, asking, for instance:

[Poll results: % respondents stating Not Read, Read Superficially, or Read in Depth]

Such polls of course have to be taken with a pinch of salt, as the respondents are self-selected, and the poll allows only very brief questions with no nuance. It is clear that within any one discipline, there is wide variability in editorial engagement. Nevertheless, I find it a matter of concern that in all areas, some respondents had experienced a journal editor who did not appear to have read the paper they had accepted, and in biomedicine, neuroscience, and genetics, and also in mega journals, this was as high as around 25-33%.

So my conclusion is that it is not surprising that we are seeing phenomena like paper mills, because the gatekeepers of the publication process are not doing their job. The solution would be either to change the culture for editors, or, where that is not feasible, to accept that we can do without editors. But if we go down that route, we should move to a model such as F1000 with much greater quality control over reviewers and COI, and much more openness and transparency.

As usual comments are welcome: if you have trouble getting past comment moderation, please email me.
