Last week many words were written for Peer Review Week, so you might wonder whether there is anything left to say. I may have missed something, but I think I do have a novel take on this, namely to point out that some recent developments in automation may be making journals vulnerable to fake peer review.

Finding peer reviewers is notoriously difficult these days. Editors are confronted with a barrage of submissions, many outside their area. They can ask authors to recommend peer reviewers, but this invites malpractice: authors may recommend friends, or even individuals tied up with paper mills who might write a positive review in return for payment.

One way forward is to harness the power of big data to identify researchers who have a track record of publishing in a given area. Many publishers now use such systems. In this way, a journal editor can select from a database of potential reviewers that is built by identifying papers whose content overlaps with a given submission.
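Publishers don't spell out how their matching systems work, so what follows is only a minimal sketch of the general idea, assuming each paper is reduced to a set of keywords and candidates are ranked by Jaccard similarity (shared keywords divided by all keywords). The function names, reviewers and keywords are all hypothetical.

```python
# Minimal sketch of overlap-based reviewer matching (hypothetical; real
# publisher systems are not described in the post). Each paper is reduced
# to a set of keywords, and candidates are ranked by keyword overlap.


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared keywords divided by all keywords; 0.0 if both sets are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_reviewers(submission: set[str],
                   corpus: dict[str, list[set[str]]],
                   top_n: int = 3) -> list[tuple[str, float]]:
    """Score each candidate by their best-matching paper; return the top_n."""
    scores = []
    for author, papers in corpus.items():
        best = max((jaccard(submission, p) for p in papers), default=0.0)
        scores.append((author, best))
    # Highest overlap first.
    scores.sort(key=lambda pair: pair[1], reverse=True)
    return scores[:top_n]


if __name__ == "__main__":
    submission = {"peer review", "paper mills", "research integrity"}
    corpus = {
        "Reviewer A": [{"peer review", "open science"}],
        "Reviewer B": [{"paper mills", "research integrity", "retractions"}],
        "Reviewer C": [{"neuroscience", "fmri"}],
    }
    for author, score in rank_reviewers(submission, corpus):
        print(f"{author}: {score:.2f}")
    # Prints: Reviewer B: 0.50, Reviewer A: 0.25, Reviewer C: 0.00
```

The point of the sketch is what is missing from it: the score measures only topical overlap, so nothing in the ranking asks whether the candidate's papers are genuine.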

I have become increasingly concerned, however, that the use of algorithm-based systems might leave a journal vulnerable to fraudulent peer reviewers who have accumulated publications by using paper mills. I became interested in this when submitting work to Wellcome Open Research and F1000, which use open peer review but where it is the author, rather than an editor, who selects reviewers. Clearly, with such a system, one needs to be careful to avoid malpractice, and strict criteria are imposed. As explained here, reviewers need to be:

- Qualified: typically hold a doctorate (PhD/MD/MBBS or equivalent).

- Expert: have published at least three articles as lead author in a relevant topic, with at least one article having been published in the last five years.

- Impartial: no competing interests and no co-authorship or institutional overlap with current authors.

- Global: geographically diverse and from different institutions.

- Diverse: in terms of gender, geographic location and career stage.

Unfortunately, now that we have paper mills, which allow authors, for a fee, to generate and publish large numbers of fake papers, these criteria are inadequate. Consider the case of Mohammed Sahab Uddin, who features in this account in Retraction Watch. As far as I am aware, he does not have a doctorate*, but I suspect people would be unlikely to query the qualifications of someone with 137 publications and an H-index of 37. By the criteria above, he would be welcomed as a reviewer from an underrepresented location. And indeed, he was frequently used as a reviewer: Leslie McIntosh, who unmasked Uddin’s deception, noted that before Uddin wiped his Publons profile, he had been listed as a reviewer on 300 papers.
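To make the weakness concrete, here is a minimal sketch of the per-reviewer criteria listed above applied as a mechanical check on publication metadata. The data structures, the reading of "in the last five years" as within five calendar years, and the example profile are all hypothetical assumptions; the "Global" and "Diverse" criteria describe the panel as a whole, so they are left out.

```python
from dataclasses import dataclass, field


@dataclass
class Paper:
    year: int
    lead_author: bool
    on_topic: bool
    # The criteria never ask whether a paper is genuine; this flag exists
    # only to show that meets_criteria() below never reads it.
    from_paper_mill: bool = False


@dataclass
class Candidate:
    name: str
    has_doctorate: bool
    institution: str
    coauthors: set[str] = field(default_factory=set)
    papers: list[Paper] = field(default_factory=list)


def meets_criteria(c: Candidate, authors: set[str],
                   author_institutions: set[str], current_year: int) -> bool:
    """Hypothetical mechanical check of the Qualified/Expert/Impartial criteria."""
    qualified = c.has_doctorate
    lead_on_topic = [p for p in c.papers if p.lead_author and p.on_topic]
    # Assumed reading: at least three lead-author papers on the topic, at
    # least one of them within the last five years.
    expert = len(lead_on_topic) >= 3 and any(
        current_year - p.year <= 5 for p in lead_on_topic)
    impartial = (not (c.coauthors & authors)
                 and c.institution not in author_institutions)
    return qualified and expert and impartial


if __name__ == "__main__":
    # Hypothetical profile built entirely from paper-mill output.
    padded = Candidate(
        name="Hypothetical reviewer",
        has_doctorate=True,
        institution="Institution X",
        papers=[Paper(year=2018 + i % 4, lead_author=True, on_topic=True,
                      from_paper_mill=True) for i in range(10)],
    )
    print(meets_criteria(padded, {"Author 1"}, {"Institution Y"}, 2022))
    # Prints: True
```

Every check passes because each one counts or compares metadata; none of them can tell a fabricated paper from a real one.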

This is not an isolated case. We are only now beginning to get to grips with the scale of the problem of paper mills. There are undoubtedly many other cases of individuals who are treated as trusted reviewers on the back of fraudulent publications. Once in positions of influence, they can further distort the publication process. As I noted in last week's blogpost, open peer review offers a degree of defence against this kind of malpractice, as readers will at least be able to evaluate the peer review, but it is disturbing to consider how many dubious authors will have already found themselves promoted to positions of influence based on their apparently impressive track record of publishing, reviewing and even editing.

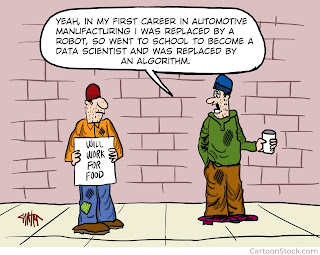

I started to think about how this might interact with other moves to embrace artificial intelligence. A recent piece in Times Higher Education stated: “Research England has commissioned a study of whether artificial intelligence could be used to predict the quality of research outputs based on analysis of journal abstracts, in a move that could potentially remove the need for peer review from the Research Excellence Framework (REF).” This seems to me to be the natural endpoint of the move away from trusting the human brain in the publication process. We could end up with a system where algorithms write the papers, which are attributed to fake authors, peer reviewed by fake peer reviewers, and ultimately evaluated in the Research Excellence Framework by machines. Such a system is likely to be far more successful than mere mortals, as it will be able to rapidly and flexibly adapt to changing evaluation criteria. At that point, we will have dispensed with the need for human academics altogether and have reached peak academia.

*Correction 30/9/22: Leslie McIntosh tells me that Uddin does have a doctorate and was working on a postdoc.

As mentioned above regarding the required qualifications of reviewers (i.e., the degree requirement, the first criterion above), another glaring example is a man named Afshin Davarpanah, who was an Associate Editor for "Energy Reports/ELSEVIER" and some other journals, and reviewed many papers (including in Special Issues) while holding only a master's degree from a very low-ranked institution with a low GPA. He has only just become a PhD student at a small university, Aberystwyth University in the UK (https://www.aber.ac.uk/en/maths/staff-profiles/listing/profile/afd6/). Some of his dark record can be found on PubPeer: https://pubpeer.com/search?q=Afshin+Davarpanah
